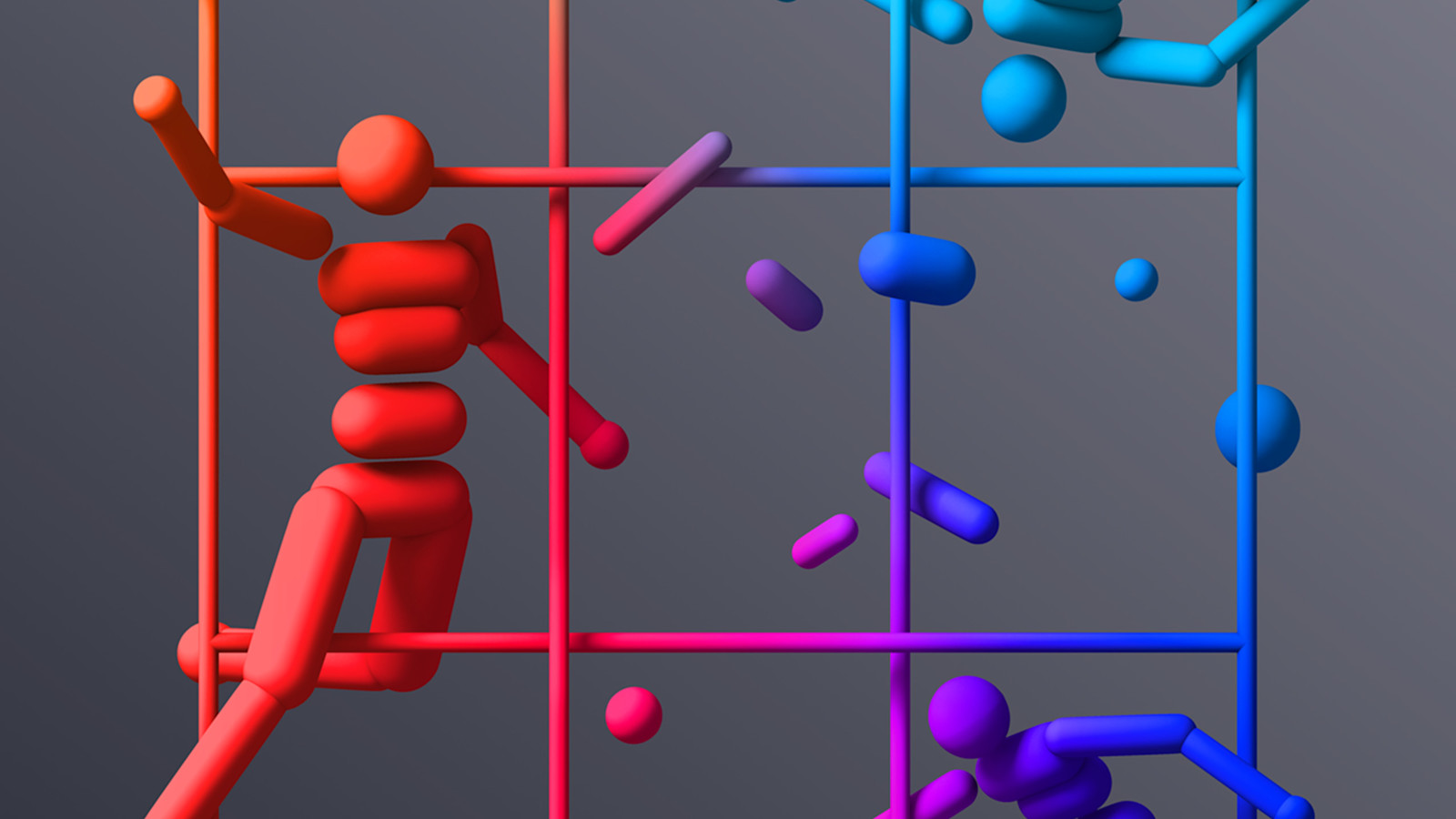

artificial intelligence, a groundbreaking method is emerging that could redefine how machines learn complex physical tasks. Self-play, a concept gaining traction, allows AI agents to develop a suite of physical skills such as tackling, ducking, and faking, all without human-engineered environments dictating every move.

The Self-Play Advantage

Self-play offers a dynamic difficulty curve that adapts in real-time, ensuring that AI systems continually face challenges that are just right for their current level of competence. This adaptability is key. It means that the AI isn't just rehearsing pre-determined tasks but is actively engaging in problem-solving and skill acquisition on the fly.

For instance, AI agents in simulated environments have demonstrated the ability to kick, catch, and even dive for objects like a soccer ball. These aren't skills that were explicitly encoded into their programming. Instead, they were acquired through a process of trial, error, and adaptation. The AI-AI Venn diagram is getting thicker, as these agents learn from the unpredictable dynamics of their own interactions.

Lessons from Dota 2

The implications of self-play have already been showcased in the competitive gaming arena, with notable success in Dota 2. There, AI agents honed their strategies by playing against themselves. The result was an AI capable of competing at a high level against human players. This isn't a partnership announcement. It's a convergence of AI learning methodologies that challenges the traditional ways we've structured machine learning environments.

Given these results, the potential for self-play extends far beyond gaming. Imagine robotics systems that develop tactile skills by interacting with their environments, or autonomous vehicles that refine their navigation algorithms by simulating road scenarios. The compute layer needs a payment rail, and self-play might just be the currency of future AI development.

A Path Forward

But what does this mean for the future of AI development? Should we pivot more resources towards self-play techniques to expedite AI learning across various domains? If agents have wallets, who holds the keys? In a world where machines are endowed with increasing degrees of autonomy, self-play could be the underpinning mechanism that accelerates the transition from programmed behaviors to more agentic, adaptive systems.

The confidence in self-play as a training method is growing. As these techniques mature, they're likely to become central to the AI systems of tomorrow. The question isn't whether self-play will be significant, but rather how soon it will reshape machine learning. We're building the financial plumbing for machines, and self-play is a critical pipe in this infrastructure.