Advancing independent research on AI alignment

OpenAI commits $7.5M to The Alignment Project to fund independent AI alignment research, strengthening global efforts to address AGI safety and security risks.

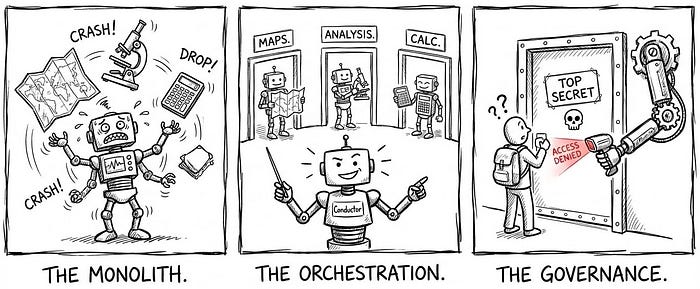

Last Updated on February 19, 2026 by Editorial Team

Author(s): Shreyash Shukla

Originally published on Towards AI.

Image Source: Google Gemini

The “Generalist” Ceiling

In the previous five articles, we architected a robust single agent. It has memory, tools, and user context. However, as we scale this agent to handle enterprise-grade complexity, such as “Deep-Dive Root Cause Analysis” or “Metric Design”, we hit a hard ceiling: The Generalist Trap.

The Constraint

A single agent operating on a monolithic system prompt is inherently limited. Microsoft Research notes that while single-agent architectures offer simplicity, they often falter in complex, dynamic environments where distinct, conflicting reasoning paths are required [Single-agent vs. Multi-agent architectures — Microsoft Learn]. When we try to force one agent to be an expert in everything (SQL generation, data visualization, statistical hypothesis testing, and creative metric design), we dilute its performance in everything. The context window becomes crowded with competing instructions, leading to “attention drift”, where the model ignores specific constraints.

The Bloat (The “One Giant Brain” Problem)

From an engineering perspective, a monolithic agent is a liability. Stacking hundreds of tools and thousands of lines of instructions into a single system_prompt creates a “God Object.”

Debuggability: If the agent fails to write correct SQL, is it because of the SQL instruction or because the new “Creative Writing” instruction confused it?

Hallucination: Research on Magentic-One (Microsoft’s Multi-Agent System) demonstrates that monolithic agents struggle with inflexible workflows, whereas encapsulating distinct skills in separate agents significantly reduces error rates [Magentic-One: A Generalist Multi-Agent System — Microsoft Research].

The Solution: The Swiss Army Knife Pattern

To break this ceiling, we must transition from a “Worker” model to an “Orchestrator” model.
We stop building one giant bot and start building a Team.

The Shift: We decompose the monolith into a fleet of Specialist Agents (Sub-Agents).

The Orchestrator: The primary agent becomes a router. Its only job is to understand intent and delegate the task to the correct expert.

Validation: Industry benchmarks using frameworks like AutoGen show that multi-agent systems can increase accuracy on complex tasks by over 20% simply by allowing agents to critique and refine each other’s work [How Multi-Agent LLMs Differ from Traditional LLMs — Deepchecks].

Image Source: Google Gemini

The Specialist Fleet (Process-Based Agents)

To break the “Generalist Ceiling,” we adopt a Multi-Agent Architecture. Instead of a single agent attempting to be a “Jack of all trades,” we build a fleet of distinct “Experts.” This approach mirrors the “Agentic Design Patterns” advocated by AI thought leaders like Andrew Ng. His research emphasizes that decomposing a complex workflow into smaller, iterative steps handled by specialized agents (e.g., Coder, Critic) significantly outperforms zero-shot prompting. By isolating roles, we ensure each agent operates within a “perfect context” relevant only to its specific domain, reducing the noise that leads to hallucination [The Batch — Agentic Workflows].

The Roles: Defining the Experts

We define three primary Specialist Agents to handle the most complex data workflows. These align with the “Mixture of Experts” pattern often discussed in advanced LLM system design:

The Deep Analysis Agent (The Investigator): This agent is optimized for structured, multi-turn data slicing. It does not just “query data”; it guides the user through a hypothesis tree. It asks clarifying questions (“Do you want to slice by Region or Product?”) and suggests dimensions for investigation.

The Metric Innovation Agent (The Architect): Designing a new KPI (Key Performance Indicator) requires a different cognitive mode than debugging code. This agent is instructed to act as a “Product Manager,” focusing on business logic, formula stability, and edge-case definitions before any SQL is written.

The Exploration Agent (The Scout): This agent is designed for open-ended discovery. It uses “Exploratory Data Analysis” (EDA) techniques to uncover hidden patterns or anomalies in datasets without a predefined user hypothesis.

The Orchestrator: The Routing Logic

The core of this system is the Orchestrator (formerly the Generalist). It no longer attempts to solve every problem. Instead, it functions as a Router Chain, a concept formalized by frameworks like LangChain [LangChain Router Chains Documentation].

The Listener: The Orchestrator actively analyzes the user’s intent. If a user says, “Why did customer engagement drop last quarter?”, the Orchestrator recognizes this as a “Root Cause” problem.

The Handoff: It does not try to answer. Instead, it triggers a handoff tool: transfer_to_agent(agent_name="exploration_agent").

The Result: The user is seamlessly transitioned to the expert agent, which loads its own specialized system prompt and toolset, ensuring the context window is clean and focused.

Image Source: Google Gemini

The Gatekeeper (Sub-Agent Access Control)

In a monolithic system, access control is binary: you are either “in” or “out.” But in an Expert Ecosystem, granularity is essential. Not every user should have access to every specialist.

The Risk: A “Sales Program X Agent” might have access to sensitive compensation data.

The Standard: We cannot rely on the LLM to police itself (“Please don’t show this data”). According to the OWASP Top 10 for LLMs, “Model Denial of Service” and “Unauthorized Access” must be mitigated by a deterministic control layer, not by prompt engineering [OWASP Top 10 for LLM Applications].
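To make the Orchestrator's Listener and Handoff steps from the routing section concrete, here is a minimal, framework-free sketch in Python. It is an illustration under stated assumptions, not the article's implementation: the keyword table, the Handoff dataclass, classify_intent, and orchestrate are hypothetical names, and the transfer_to_agent function below is a plain stand-in for the handoff tool named in the text. A real Orchestrator would most likely let the LLM classify intent rather than match substrings.

```python
from dataclasses import dataclass
from typing import Optional

# Hypothetical keyword rules standing in for LLM-based intent detection.
# Agent names follow the article; the keywords are illustrative only.
ROUTING_RULES = {
    "exploration_agent": ("why did", "root cause", "anomal"),
    "metric_innovation_agent": ("new kpi", "design a metric", "define a metric"),
    "deep_analysis_agent": ("slice", "break down", "compare"),
}


@dataclass
class Handoff:
    """Record of a delegation from the Orchestrator to a specialist."""
    agent_name: str
    user_message: str


def classify_intent(message: str) -> Optional[str]:
    """The Listener: map a user message to a specialist name, or None."""
    text = message.lower()
    for agent_name, keywords in ROUTING_RULES.items():
        if any(keyword in text for keyword in keywords):
            return agent_name
    return None


def transfer_to_agent(agent_name: str, user_message: str) -> Handoff:
    """Stand-in for the handoff tool; a real system would load the
    specialist's own system prompt and toolset at this point."""
    return Handoff(agent_name=agent_name, user_message=user_message)


def orchestrate(message: str) -> Optional[Handoff]:
    """The Orchestrator never answers itself; it only delegates."""
    agent_name = classify_intent(message)
    if agent_name is None:
        return None  # no specialist matched; ask a clarifying question instead
    return transfer_to_agent(agent_name=agent_name, user_message=message)


if __name__ == "__main__":
    print(orchestrate("Why did customer engagement drop last quarter?"))
```

Keeping the router free of any domain logic is the point of the design: adding a specialist means adding one routing entry, not editing a shared monolithic prompt.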
The Mechanism: Middleware Interception

To solve this, we implement a Middleware Pattern (specifically, a sub_agent_access module) that acts as a firewall between the Orchestrator and the Sub-Agents. This aligns with the NIST Zero Trust Architecture, which mandates that access to individual enterprise resources must be granted on a per-session basis [NIST SP 800-207: Zero Trust Architecture].

The Enforcement Flow

We utilize a Hook System (specifically the before_tool_callback) to enforce these rules. The flow is strictly deterministic:

Intercept: When the Orchestrator decides to call transfer_to_agent('Exploration_Agent'), the middleware halts the execution before the tool runs.

Verify (RBAC Check): The system retrieves the user’s identity (User ID) and cross-references it against the specific Access Control List (ACL) for the target agent (e.g., Exploration_Agent_Allowed_Users).

Enforce:

If Authorized: The callback returns None, allowing the tool to proceed and the sub-agent to load.

If Unauthorized: The callback raises a PermissionError. The tool call is rejected, […]