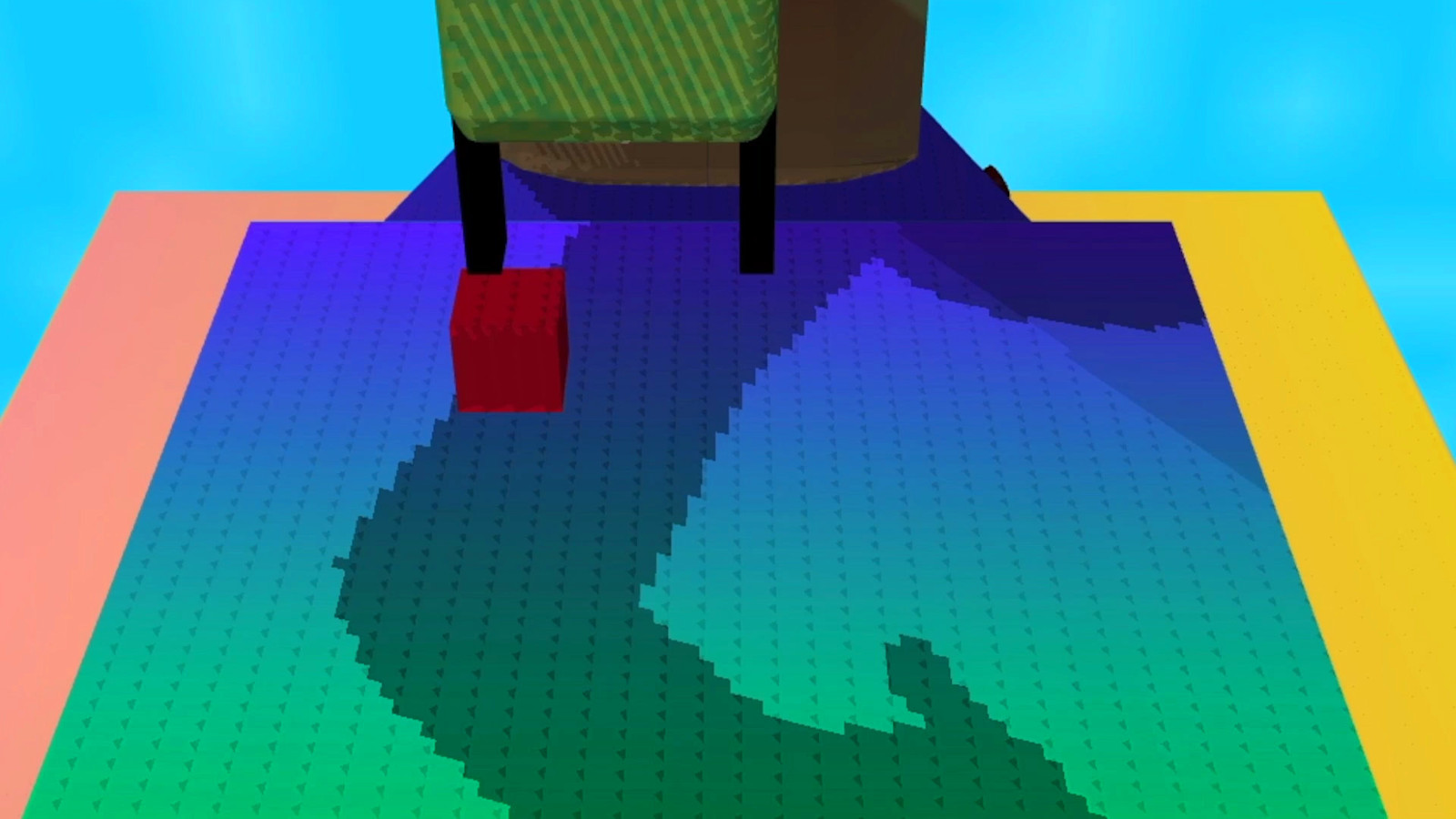

OpenAI has taken a significant stride in artificial intelligence by releasing Safety Gym, a comprehensive suite of environments and tools aimed at advancing reinforcement learning agents that adhere to safety constraints during training. But what does this mean for the AI community, and why should we care?

Reinforcement Learning Meets Safety

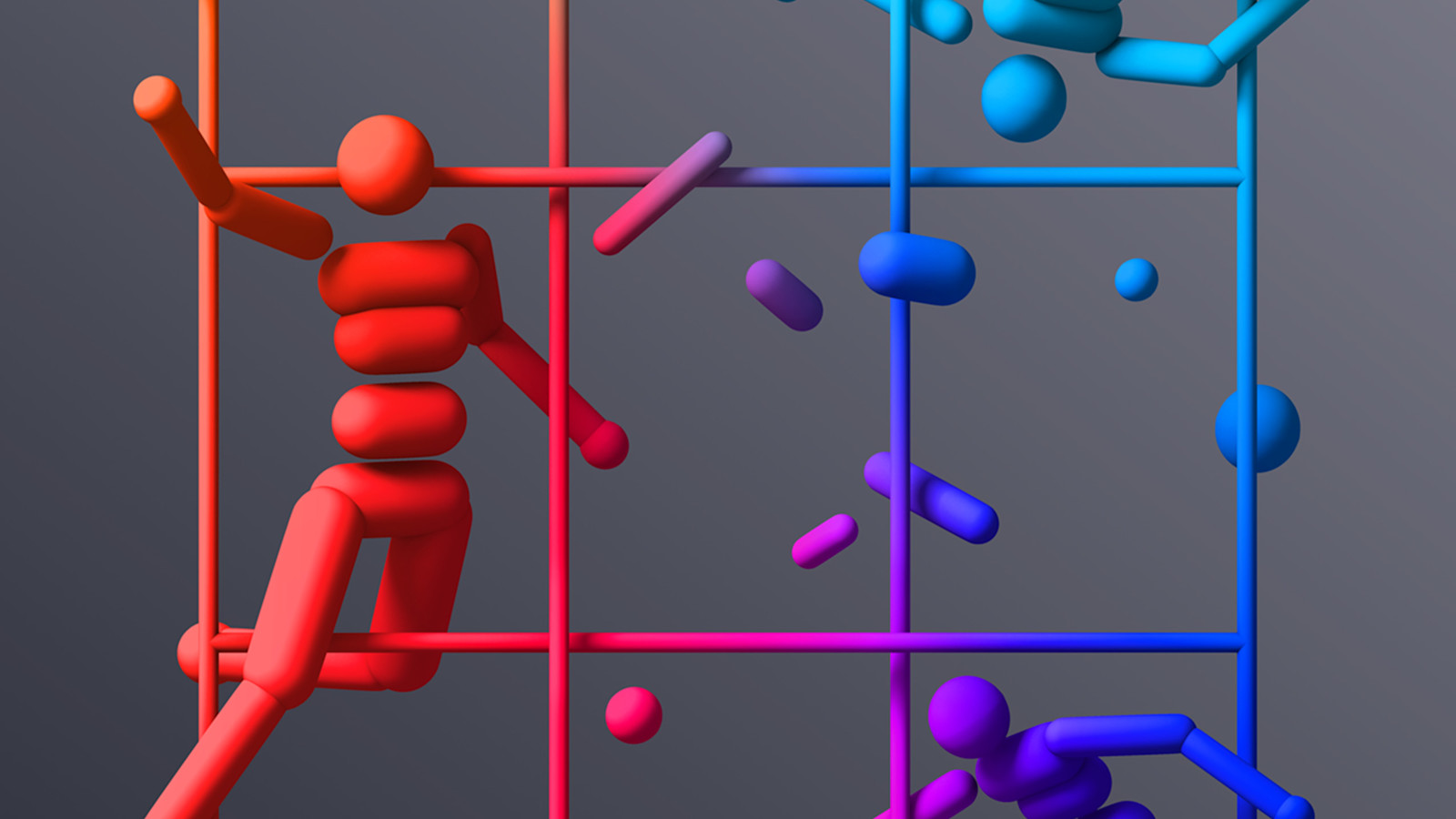

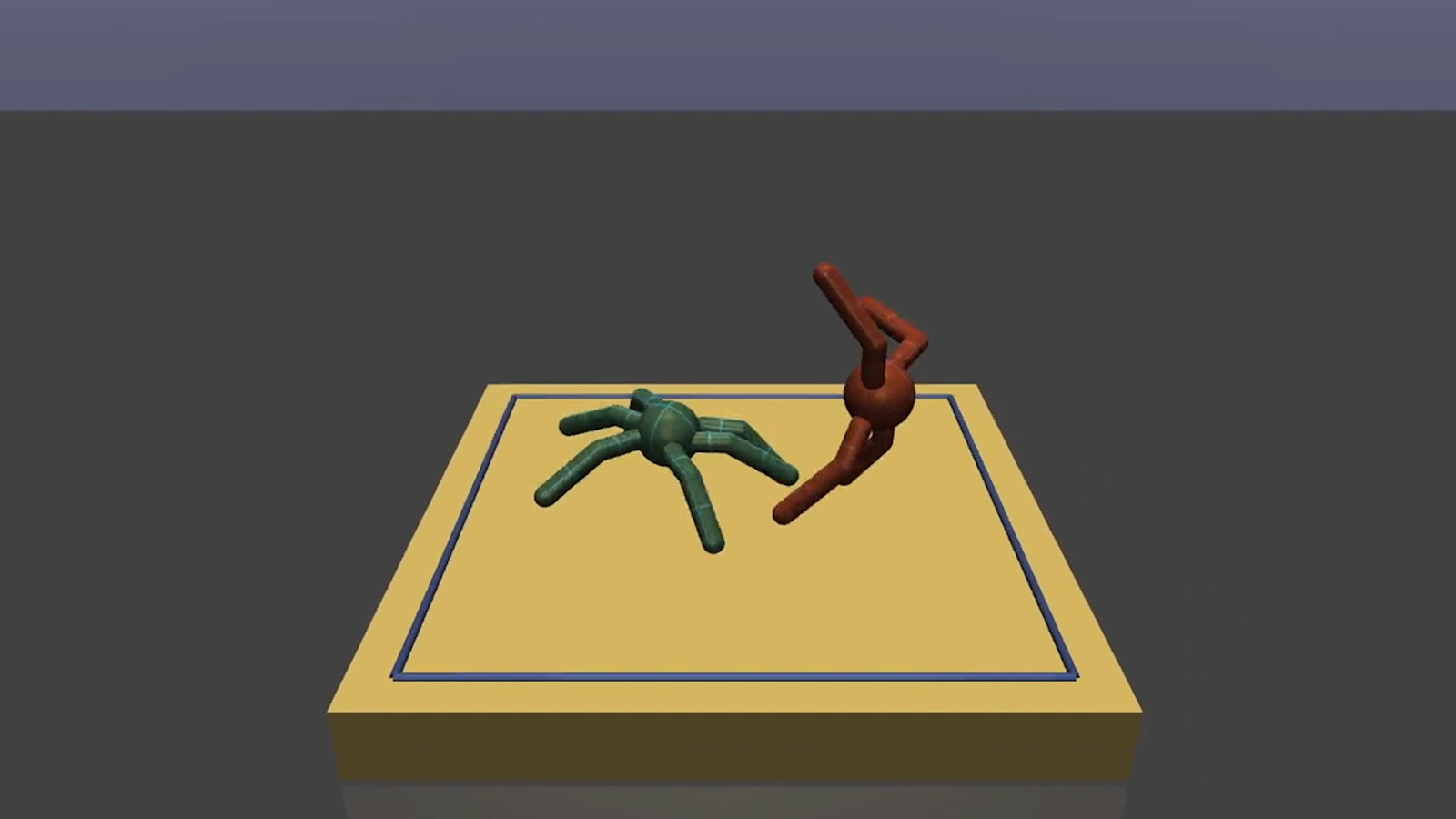

Reinforcement learning has long been heralded as a powerful method for training AI agents, providing them with the ability to learn complex tasks through trial and error. However, this often comes with the risk of agents taking potentially unsafe actions in the process. Safety Gym aims to tackle this by providing a controlled environment where safety constraints are a key focus.
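To make the idea of learning under a safety constraint concrete, here is a minimal toy sketch in Python. It isn't Safety Gym's API: the `ToyHazardLine` environment, the `run_episode` helper, and the two policies are hypothetical names invented for illustration. The one assumption it leans on is that unsafe behavior is reported as a separate cost signal rather than folded into the reward, so the two can be tracked independently.

```python
from dataclasses import dataclass


@dataclass
class ToyHazardLine:
    """Hypothetical 1-D world: start at 0, reach `goal`, avoid the `hazard` cell."""
    goal: int = 5
    hazard: int = 2
    position: int = 0

    def reset(self) -> int:
        self.position = 0
        return self.position

    def step(self, action: int):
        # action is a signed step size, e.g. +1 (walk) or +2 (jump)
        self.position = max(0, self.position + action)
        reward = 1.0 if self.position >= self.goal else 0.0
        # Assumption: safety violations are reported as a cost, separate from reward.
        cost = 1.0 if self.position == self.hazard else 0.0
        done = self.position >= self.goal
        return self.position, reward, done, {"cost": cost}


def run_episode(env, policy, max_steps: int = 50):
    """Run one episode and return (total reward, total safety cost)."""
    obs = env.reset()
    total_reward, total_cost = 0.0, 0.0
    for _ in range(max_steps):
        obs, reward, done, info = env.step(policy(obs))
        total_reward += reward
        total_cost += info["cost"]
        if done:
            break
    return total_reward, total_cost


def always_right(obs: int) -> int:
    """Walks straight to the goal, stepping on the hazard along the way."""
    return 1


def careful(obs: int) -> int:
    """Jumps over the hazard cell at position 2, otherwise walks."""
    return 2 if obs == 1 else 1


print(run_episode(ToyHazardLine(), always_right))  # (1.0, 1.0)
print(run_episode(ToyHazardLine(), careful))       # (1.0, 0.0)
```

Both policies reach the goal and earn the same reward, but only one does so without incurring any cost. A reward-only view can't tell them apart, which is the gap a safety-focused benchmark is meant to expose.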

Color me skeptical, but the AI community has a history of overpromising and underdelivering on safety. So, is Safety Gym really a big deal, or just another tool in the growing AI toolbox? OpenAI's track record in pushing boundaries suggests this might be a genuine leap forward.

The Need for Safety Constraints

Let's apply some rigor here. The need for safety constraints in AI isn't just an academic pursuit but a necessity as AI systems continue to permeate critical areas of our lives. From self-driving cars to automated healthcare systems, the stakes couldn't be higher. Yet many current models fail to ensure safety during the learning phase. That's why OpenAI's focus on creating these bespoke environments is essential.

What they're not telling you: Safety Gym isn't just about training better models. It's an implicit acknowledgment that the path to advanced AI isn't merely about achieving high performance. It's also about ensuring these systems don't cause unintended harm in the real world.

Why This Matters

The release of Safety Gym underscores a fundamental shift in the AI community's priorities. Gone are the days when performance alone could take center stage. Now, the focus is transitioning toward building systems that can operate safely alongside humans. But will this shift be enough to prevent the kind of mishaps that have plagued AI deployments in the past?

I've seen this pattern before in the tech world: a rush to embrace new methodologies without fully understanding their implications. OpenAI's Safety Gym, however, takes a calculated approach by acknowledging the importance of safety from the ground up, which could set a new standard for the industry.

Safety Gym is more than just a suite of tools. It's a clear signal that the future of AI hinges not only on intelligence but on safety and reliability. The AI community should take note, as those who invest in safe AI development now will likely lead the charge in this evolving field.